Where to Begin With Agentic AI

By Bhavesh Amin

Updated on 23 February 2026

There is some debate about whether we’re in an AI bubble right now. While some models and AI providers will disappear into the void, taking a large chunk of the environment with them, agentic AI does bring real benefits. So, where do you begin?

The moon on a stick

There is considerable excitement about what agentic AI can do for companies, and CEOs may expect an immediate return on investment. The reality is that replicating complete processes with agents takes time, and there will be lessons learned along the way. There is likely to be a trade-off between quality and time savings, since for many processes time is the resource you want to free up. Also, if your company already invests heavily in automation, the benefits of agentic systems may be less significant than for companies that have not.

Security is another factor that can open a huge gap between expectations and delivery. The risks to consider include prompt injection, the exposure of your credentials to third parties through tools and agents, data protection breaches, and agents executing commands beyond their intended scope. Prompt injection occurs when malicious instructions embedded in user input or external data trick the LLM into bypassing its original instructions; in the worst cases, this can give bad actors access to your systems via tool-enabled agents. Data protection breaches can occur if customer data is exposed to third-party APIs, or to malicious tools that harvest your data through the agent.

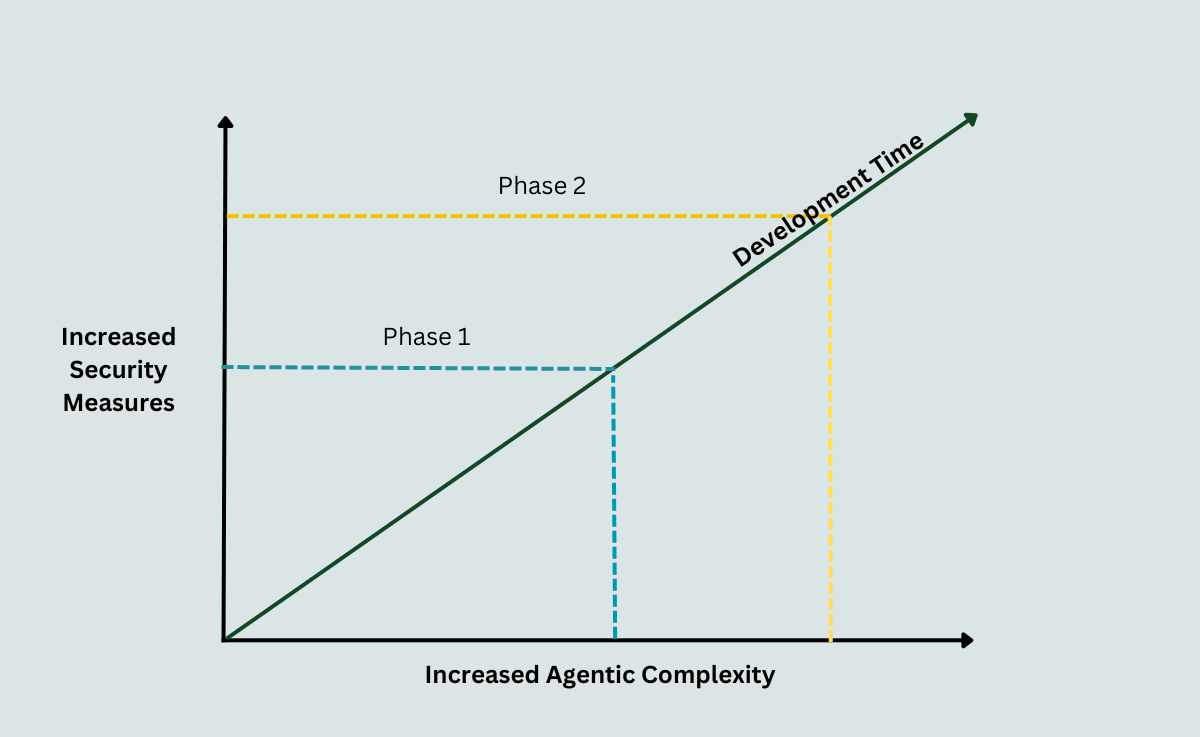

To mitigate some of these risks, apply your company's security policy to your agentic workflows. This may mean requiring users to enter verification codes at various steps, having IT Security sign off on third-party tools and integrations, giving agents least-privilege access, and ensuring that no company data is exposed to open systems. You should also create an agentic AI security framework and best-practices document covering the risks specific to agentic AI. Borrowing from Jack Barker’s (of Silicon Valley fame, and yes, I did rewatch Silicon Valley recently) conjoined triangle of success, you can think about the balance between security and agentic workflow complexity:

Figure 1: Balance between security measures and increasing AI engineering complexity

For example, Phase 1 could involve using agents and tools to analyse market data, and Phase 2 could add tools and agents that work with your company data, which will require an additional layer of security. Keep in mind that your IT Security or cybersecurity team won’t sign off on some tools or agentic AI systems.
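As a rough illustration of least-privilege access across phases, the sketch below routes every tool call through a per-phase allowlist and asks a human to approve calls that touch company data. The tool names, the `ToolGate` class and the `approve` callback are hypothetical and not part of any particular agent framework; in practice you would wire something similar into whichever framework you choose.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical tool names: Phase 1 uses external market data only,
# Phase 2 adds a tool that reads company data.
PHASE_TOOL_ALLOWLIST = {
    1: {"fetch_market_data", "summarise_report"},
    2: {"fetch_market_data", "summarise_report", "query_customer_db"},
}

# Tools touching company data need a human to approve each call.
REQUIRES_APPROVAL = {"query_customer_db"}


class ToolNotAllowed(Exception):
    """Raised when an agent tries to call a tool outside its permissions."""


@dataclass
class ToolGate:
    phase: int
    approve: Callable[[str, dict], bool]  # e.g. asks a user for a verification code
    audit_log: list = field(default_factory=list)

    def call(self, tool_name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
        allowed = PHASE_TOOL_ALLOWLIST.get(self.phase, set())
        if tool_name not in allowed:
            self.audit_log.append((tool_name, "blocked"))
            raise ToolNotAllowed(f"{tool_name} is not permitted in phase {self.phase}")
        if tool_name in REQUIRES_APPROVAL and not self.approve(tool_name, kwargs):
            self.audit_log.append((tool_name, "rejected"))
            raise ToolNotAllowed(f"{tool_name} call was not approved")
        self.audit_log.append((tool_name, "allowed"))
        return tool(**kwargs)


# Phase 1 gate: market data calls go through, company data calls are blocked.
gate = ToolGate(phase=1, approve=lambda name, args: False)
print(gate.call("fetch_market_data", lambda ticker: f"prices for {ticker}", ticker="ACME"))
```

A Phase 2 gate would allow `query_customer_db`, but only once the approval callback returns `True`, and the audit log gives IT Security a record of what each agent attempted.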

In short, manage expectations. Don’t promise the world and then deliver a chatbot that has to hand every customer query over to a human.

Put on your product manager’s hat

Create a table of all the potential jobs agents could do for internal and external customers, split into quick wins, medium-term, and long-term projects. The medium- and long-term projects can be broken into sprints later on. For each job, estimate the time to build, a security risk score, and the potential value per annum (you may have to make some crude assumptions based on discussions with various stakeholders). You should also estimate the failure rate and how it will increase the cost of the workflow. This is not just the cost of extra LLM calls, but also the additional time a human will have to spend intervening in the process.

If, at this stage, you can easily break down the time to build and the value gained for smaller parts of complex workflows, that will help with the decision-making process. For example, one part of an email marketing process may involve creating the lists to send the email to, while a separate process updates the email content from a folder containing the email copy and images.
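To make the failure-rate point concrete, here is a minimal sketch of rows in such a table, assuming each failed run costs one extra LLM run plus a fixed amount of human time to fix. The `AgentJob` class, its fields and the figures for the two email subtasks are illustrative placeholders, not real estimates.

```python
from dataclasses import dataclass


@dataclass
class AgentJob:
    name: str
    horizon: str             # "quick win", "medium-term" or "long-term"
    build_days: float        # estimated time to build
    security_risk: int       # hypothetical scale: 1 (low) to 5 (high)
    value_per_annum: float   # gross value if the agent never failed
    runs_per_year: int
    llm_cost_per_run: float
    failure_rate: float      # fraction of runs that fail and need a human fix
    fix_minutes: float       # human time spent on each failed run
    hourly_cost: float       # loaded hourly cost of that human time

    def failure_cost(self) -> float:
        """Extra LLM run plus human fixing time for every failed run."""
        failures = self.runs_per_year * self.failure_rate
        return failures * (self.llm_cost_per_run + self.fix_minutes / 60 * self.hourly_cost)

    def net_value(self) -> float:
        running_cost = self.runs_per_year * self.llm_cost_per_run
        return self.value_per_annum - running_cost - self.failure_cost()


# Placeholder figures for two separately scoped parts of an email marketing workflow.
jobs = [
    AgentJob("Build email send lists", "quick win", 5, 2, 12_000, 520, 0.40, 0.10, 20, 45),
    AgentJob("Update email content from folder", "medium-term", 15, 3, 18_000, 260, 1.20, 0.25, 30, 45),
]

# Rank by net value per day of build effort.
for job in sorted(jobs, key=lambda j: j.net_value() / j.build_days, reverse=True):
    print(f"{job.name}: net {job.net_value():,.0f}/yr, {job.build_days} days, risk {job.security_risk}")
```

Ranking by net value per build day is only one option; you might equally weight the security risk score more heavily.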

Figure 2: An example of a table of high-level tasks and various scores

This table is the first step in identifying which projects to focus on. While quick wins seem like a good place to start, if they only add incremental value to your business, do they need a project team and developers working on them? In fact, some quick wins that use only low-risk external APIs and data could be handed over to the relevant departments. For example, if you have a process that produces regular market reports on competitor products, taking information from various websites or external tools and creating a report on the findings, this could be a simple project for the marketing team to work on. The risk of privacy or security breaches should be comparatively low (provided you're not sending sensitive data to APIs or giving tools access to your filesystems), as long as IT Security signs off on their workflows and the tools used.

Handing these quick wins over also gives different departments the opportunity to embrace agentic AI. The main involvement from developers would be finding (and setting up) the right tool for each team, based on its skillset and the process it wants to create. No-code tools such as Make, Zapier, and n8n may be capable of replicating the simple processes these departments require. Analysing the quick-win workflows that could be passed on to individual departments can help narrow down the best tools to invest in.
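A triage rule like this can be written down explicitly so that every candidate is routed the same way. The sketch below is one hypothetical way to express it; the `Candidate` fields, the thresholds and the `route` function are assumptions to agree with stakeholders and IT Security, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    horizon: str                # "quick win", "medium-term" or "long-term"
    security_risk: int          # hypothetical scale: 1 (low) to 5 (high)
    uses_company_data: bool     # touches internal or customer data
    net_value_per_annum: float


# Hypothetical thresholds: agree real values with stakeholders and IT Security.
MAX_DELEGATED_RISK = 2
MIN_PROJECT_TEAM_VALUE = 50_000


def route(c: Candidate) -> str:
    """Suggest who should own a candidate workflow."""
    low_risk_external = c.security_risk <= MAX_DELEGATED_RISK and not c.uses_company_data
    incremental = c.net_value_per_annum < MIN_PROJECT_TEAM_VALUE
    if c.horizon == "quick win" and low_risk_external and incremental:
        return "department (no-code tool, after IT Security sign-off)"
    return "project team"


print(route(Candidate("Competitor product report", "quick win", 1, False, 8_000)))
print(route(Candidate("Customer churn alerts", "quick win", 4, True, 8_000)))
```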

As I mentioned in a previous article, there is a benefit to starting with internal customers rather than external ones: they will be more forgiving if mistakes happen, and any issues will not damage your brand. Also, scoring each task in terms of privacy and security will assist the decision-making process, as the riskier tasks will involve a lot of testing.

Frameworks

As mentioned in the previous section, the workers’ skillsets and the tasks you want to create will help determine which frameworks to choose. CrewAI offers a simple abstraction with role-based agents that can be faster to prototype with, whereas LangGraph might work better if you want to map your processes onto its graph structure of nodes and edges. It also has a robust persistence layer (if a long-running agentic process fails halfway through, it can resume from where it left off rather than starting again), which can lead to large savings. You may want to use different frameworks for different tasks, as some frameworks are designed for specific tasks, such as data analysis. Although the costs add up when you use multiple frameworks, they might produce better results or let you create processes more quickly, saving time and money in the long run. That is why a cost-benefit analysis of the different options can help shape your framework strategy. Work out the costs of each option, including cost per call, cost per user, and any usage limits.
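To show why resuming matters for cost, here is a framework-agnostic sketch of checkpointing: state is saved after each completed step, and a rerun with the same run ID skips the steps that already finished (and the LLM calls they made). This is not LangGraph’s API; the `run_with_checkpoints` function and the in-memory `store` dictionary are hypothetical stand-ins for a persistent checkpoint store such as a database.

```python
from typing import Callable

Step = tuple[str, Callable[[dict], dict]]


def run_with_checkpoints(steps: list[Step], state: dict, store: dict, run_id: str) -> dict:
    """Run steps in order, saving progress after each so a rerun can resume."""
    saved = store.get(run_id, {"done": [], "state": state})
    done, state = list(saved["done"]), dict(saved["state"])
    for name, step in steps:
        if name in done:
            continue  # finished in an earlier attempt: skip its LLM and tool calls
        state = step(state)
        done.append(name)
        store[run_id] = {"done": list(done), "state": state}
    return state


# Simulate a process whose second step times out on its first attempt.
calls = {"research": 0, "draft_report": 0}


def research(state: dict) -> dict:
    calls["research"] += 1
    return {**state, "notes": "competitor pricing notes"}  # stand-in for LLM calls


def draft_report(state: dict) -> dict:
    calls["draft_report"] += 1
    if calls["draft_report"] == 1:
        raise TimeoutError("simulated API timeout")
    return {**state, "report": "draft based on " + state["notes"]}


steps = [("research", research), ("draft_report", draft_report)]
store: dict = {}
try:
    run_with_checkpoints(steps, {}, store, run_id="weekly-report")
except TimeoutError:
    pass  # the research step's result is already checkpointed
result = run_with_checkpoints(steps, {}, store, run_id="weekly-report")  # resumes at draft_report
print(result["report"], calls)
```

The research step runs only once across both attempts; in a long workflow with many expensive steps, that is where the savings come from.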

Another important consideration is observability: tracing what is going on behind the scenes. You need to understand what your agents are doing, what information is passed between agents and between agents and tools, the outputs at each stage, and the time taken and cost of each call. While most frameworks have their own tracing functionality, is it fit for purpose for your developers and project needs? The observability tool doesn’t have to be tied to the frameworks you use; you can choose an independent observability tool if it improves the team’s work and saves costs. While LangSmith is optimised for LangChain and LangGraph, it can be used with other frameworks. Conversely, you don’t have to use LangSmith if you’ve chosen LangGraph as your agentic framework.
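To illustrate the kind of data a trace should capture, here is a minimal, framework-independent sketch of a tracing decorator that records each call’s inputs, output, error, duration and an estimated cost. The `traced` decorator, the `Span` record and the flat per-call cost are assumptions for illustration; dedicated observability tools capture this (and more) for you, and real LLM costs depend on token usage rather than a fixed figure.

```python
import functools
import time
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Span:
    """One traced call: what went in, what came out, how long and how much."""
    name: str
    inputs: dict
    output: Any = None
    error: Optional[str] = None
    seconds: float = 0.0
    cost: float = 0.0


TRACE: list = []  # in practice, send spans to your observability tool instead


def traced(name: str, cost_per_call: float = 0.0) -> Callable:
    """Hypothetical decorator: record every call to an agent or tool function."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(**kwargs: Any) -> Any:
            span = Span(name=name, inputs=kwargs, cost=cost_per_call)
            start = time.perf_counter()
            try:
                span.output = fn(**kwargs)
                return span.output
            except Exception as exc:
                span.error = repr(exc)  # record the failure, then re-raise it
                raise
            finally:
                span.seconds = time.perf_counter() - start
                TRACE.append(span)
        return wrapper
    return decorator


@traced("research_agent", cost_per_call=0.02)
def research_agent(topic: str) -> str:
    return f"notes on {topic}"  # stand-in for a real LLM call


research_agent(topic="competitor pricing")
for span in TRACE:
    print(span.name, span.inputs, span.output, f"{span.seconds:.4f}s", span.cost)
```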

North star and metrics

Setting clear, easy-to-measure metrics is important, as it is with any project. Time savings will be a key end goal for many of the projects. However, you need to define how these savings will show up, for example as FTE equivalents or reduced overtime, and ensure that you can monitor them. Include the cost of implementing the workflow, as well as the annual cost of running it. There will also be metrics tied to specific tasks, such as the accuracy of results for machine learning classification, or sales per campaign and average list size for email marketing, where there are rules against sending the same customer more than one email within a twenty-four-hour period.
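As a worked example of turning time savings into a business case, the sketch below converts hours saved per week into an FTE equivalent and a cumulative net benefit after implementation and running costs. The `WorkflowBusinessCase` class, the 46 working weeks, the 37.5-hour full-time week and all the figures are assumptions to replace with your own.

```python
from dataclasses import dataclass


@dataclass
class WorkflowBusinessCase:
    hours_saved_per_week: float
    loaded_hourly_cost: float         # salary plus overheads
    implementation_cost: float        # one-off build, testing and rollout
    annual_running_cost: float        # LLM calls, tool licences, monitoring
    working_weeks: int = 46           # assumption: adjust for your organisation
    fte_hours_per_week: float = 37.5  # assumption: contracted full-time hours

    def fte_equivalent(self) -> float:
        return self.hours_saved_per_week / self.fte_hours_per_week

    def annual_value(self) -> float:
        return self.hours_saved_per_week * self.working_weeks * self.loaded_hourly_cost

    def net_benefit(self, years: int = 1) -> float:
        """Cumulative benefit after `years`, net of build and running costs."""
        return years * (self.annual_value() - self.annual_running_cost) - self.implementation_cost


case = WorkflowBusinessCase(hours_saved_per_week=15, loaded_hourly_cost=40,
                            implementation_cost=20_000, annual_running_cost=3_000)
print(f"{case.fte_equivalent():.1f} FTE, year 1: {case.net_benefit():,.0f}, "
      f"year 2: {case.net_benefit(2):,.0f}")
```

With these placeholder figures, the workflow frees 0.4 FTE but returns only 4,600 in year one, because the build cost is paid up front; by the end of year two the cumulative benefit is 29,200.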

Other metrics to monitor relate to agent tracing, including cost per run/call, percentage of errors, human interventions, and how often an evaluator agent (an AI agent used to check a worker agent’s results) wrongly accepts incorrect outputs (false positives) or wrongly rejects correct ones (false negatives).
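These tracing metrics can be summarised from per-run records. The sketch below assumes each run has been logged with its cost, whether it errored, whether a human intervened and the evaluator’s verdict, plus a ground-truth label from a human reviewing the sample; the `RunRecord` fields and the `summarise` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    cost: float
    errored: bool
    human_intervened: bool
    evaluator_accepted: bool  # the evaluator agent's verdict
    output_correct: bool      # ground truth from a human review of the sample


def summarise(runs: list[RunRecord]) -> dict:
    n = len(runs)
    correct = [r for r in runs if r.output_correct]
    incorrect = [r for r in runs if not r.output_correct]
    return {
        "cost_per_run": sum(r.cost for r in runs) / n,
        "error_rate": sum(r.errored for r in runs) / n,
        "intervention_rate": sum(r.human_intervened for r in runs) / n,
        # False positive: the evaluator accepted an incorrect output.
        "evaluator_false_positive_rate":
            sum(r.evaluator_accepted for r in incorrect) / len(incorrect) if incorrect else 0.0,
        # False negative: the evaluator rejected a correct output.
        "evaluator_false_negative_rate":
            sum(not r.evaluator_accepted for r in correct) / len(correct) if correct else 0.0,
    }


runs = [
    RunRecord(0.10, False, False, True, True),   # correct and accepted
    RunRecord(0.10, False, False, True, False),  # incorrect but accepted: false positive
    RunRecord(0.20, True, True, False, False),   # incorrect and rejected
    RunRecord(0.20, False, True, False, True),   # correct but rejected: false negative
]
print(summarise(runs))
```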

Using these metrics will help you calculate a reasonably accurate return on investment. The percentage of times human intervention is unexpectedly required will be a key indicator. If an employee has to spend 50% of their day fixing an agent’s errors when the same process would take only 30% of their day to complete manually, the new process offers no benefit. Once your teams start testing the workflows, the metrics from the test data (make sure you have a reasonable sample size) can be used to recalculate the value per annum for the different use cases. You may find that some use cases are not worth implementing based on the agent’s performance.
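The 50% versus 30% example can be expressed as a recalculation of value per annum from test metrics. This sketch assumes a 7.5-hour working day, one run per day and a loaded hourly cost of 40; the function name and all the figures are illustrative, and a negative result means the manual process is cheaper.

```python
def recalculated_value_per_annum(
    manual_minutes_per_run: float,
    intervention_rate: float,
    minutes_per_intervention: float,
    runs_per_year: int,
    hourly_cost: float,
) -> float:
    """Value of the time an agent saves, net of the time humans spend fixing it.

    intervention_rate is the share of test runs that needed a human, measured on
    a reasonably sized sample.
    """
    expected_fix_minutes = intervention_rate * minutes_per_intervention
    saved_minutes_per_run = manual_minutes_per_run - expected_fix_minutes
    return saved_minutes_per_run / 60 * runs_per_year * hourly_cost


# Manual work takes 30% of a 7.5-hour day (135 minutes);
# fixing the agent takes 50% (225 minutes) on every run.
print(recalculated_value_per_annum(135, 1.0, 225, runs_per_year=230, hourly_cost=40))  # -13800.0

# The same workflow if only 10% of runs need a 45-minute fix.
print(recalculated_value_per_annum(135, 0.1, 45, runs_per_year=230, hourly_cost=40))
```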

Final thoughts

Hopefully, the ideas in this article can provide a springboard for strategising the best way forward with agentic AI for your company or team. Understanding your current processes will make it easier to prioritise which processes should be agentified (surely that should become a word soon). Researching the available tools and frameworks will help you see results more quickly.